Automated driving systems (ADS) must demonstrate that they are sufficiently safe. In a traditional car operated by a driver, safety is demonstrated by showing that the vehicle's components are reliable enough for the driver to control the vehicle safely. For an ADS, this safety concept is not sufficient, because the system, not the driver, is responsible for monitoring the environment and controlling the vehicle.

A critical part of demonstrating the reliability of an ADS is the system's perception of the environment, which is provided by sensors and sensor fusion. Today's ADS typically combine camera, lidar and radar sensors. Each of these has different strengths and weaknesses, and all of them perceive the environment with measurement uncertainty. It is therefore essential to specify requirements for the reliability of each individual sensor's perception and to quantify that reliability from data. Our client collects a significant amount of data from real test drives and needed a methodology to make the best use of these data for quantifying and demonstrating reliability.
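As an illustration of what quantifying one sensor's reliability from test-drive data can look like, the sketch below uses a textbook Bayesian beta-binomial model. This is an assumption chosen for illustration, not the client's framework. It treats each relevant scenario as an independent trial in which the sensor either perceives correctly or fails, places a uniform Beta(1, 1) prior on the failure probability, and uses hypothetical function names (`prob_requirement_met`, `upper_credible_bound`). It returns the posterior probability that a failure-probability requirement is met and a one-sided credible upper bound.

```python
import math


def binom_cdf(k: int, n: int, p: float) -> float:
    """P(X <= k) for X ~ Binomial(n, p), with terms computed in log space."""
    if p <= 0.0:
        return 1.0
    if p >= 1.0:
        return 1.0 if k >= n else 0.0
    total = 0.0
    for i in range(min(k, n) + 1):
        log_term = (math.lgamma(n + 1) - math.lgamma(i + 1) - math.lgamma(n - i + 1)
                    + i * math.log(p) + (n - i) * math.log1p(-p))
        total += math.exp(log_term)
    return min(total, 1.0)


def prob_requirement_met(failures: int, trials: int, p_req: float) -> float:
    """Posterior probability that the failure probability is <= p_req.

    Assumption: uniform Beta(1, 1) prior, so the posterior is
    Beta(failures + 1, trials - failures + 1). For integer parameters its CDF
    equals P(Binomial(trials + 1, p_req) >= failures + 1).
    """
    return 1.0 - binom_cdf(failures, trials + 1, p_req)


def upper_credible_bound(failures: int, trials: int, level: float = 0.95) -> float:
    """One-sided upper credible bound on the failure probability, found by bisection."""
    lo, hi = 0.0, 1.0
    for _ in range(100):
        mid = 0.5 * (lo + hi)
        if prob_requirement_met(failures, trials, mid) < level:
            lo = mid  # bound lies above mid
        else:
            hi = mid  # bound lies at or below mid
    return hi


# Hypothetical data: 0 missed detections in 2,999 relevant scenarios.
bound = upper_credible_bound(failures=0, trials=2999)
print(f"95% upper bound on failure probability: {bound:.2e}")
print(f"P(p <= 1e-3 | data) = {prob_requirement_met(0, 2999, 1e-3):.3f}")
```

Under this model, claiming a failure probability of at most 10⁻³ with 95 % posterior probability requires roughly 3,000 failure-free scenarios, which illustrates why data from a limited number of test drives must be used efficiently.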

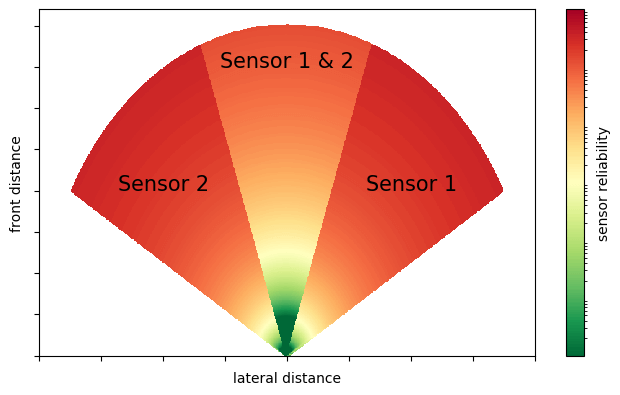

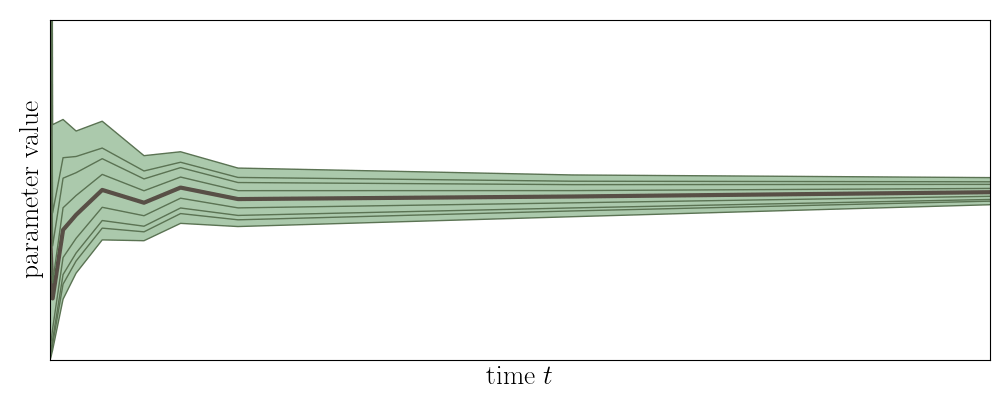

We developed a framework for specifying requirements on the reliability of each individual sensor's perception and for learning this reliability, via machine learning, from data collected in a limited number of test drives. The framework accounts for the redundancy among the sensors that a sensor fusion can exploit. It can handle data without any ground truth or with only imperfect ground truth. Because data acquisition is costly, the framework also supports dynamic planning of test drives.
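To illustrate why redundancy between sensors matters, the following sketch compares two estimates of how often all sensors fail in the same frame: one that assumes independent sensor failures and one that is read directly from per-frame failure logs. It assumes that the fused perception fails only when every sensor fails, which is a simplification; the sensor logs, the data format and the function `redundancy_summary` are hypothetical. In the toy data, frame 0 is a shared failure (for example fog), so the independence assumption underestimates the joint failure rate by more than a factor of ten.

```python
import math


def redundancy_summary(records):
    """Estimate marginal and joint failure rates from per-frame failure flags.

    records: list of dicts mapping sensor name -> True if that sensor's
    perception failed in the frame (hypothetical data format).
    """
    n = len(records)
    sensors = list(records[0])
    # Marginal failure rate of each sensor.
    marginal = {s: sum(r[s] for r in records) / n for s in sensors}
    # Joint failure rate if sensor failures were statistically independent.
    independent_joint = math.prod(marginal.values())
    # Observed rate of frames in which every sensor failed at once
    # (the assumed failure condition of the fused perception).
    empirical_joint = sum(all(r[s] for s in sensors) for r in records) / n
    return marginal, independent_joint, empirical_joint


# Hypothetical failure logs over 10 frames; frame 0 is a common-cause event.
N_FRAMES = 10
failed_frames = {"camera": {0, 1}, "lidar": {0, 2}, "radar": {0, 3}}
records = [{s: f in frames for s, frames in failed_frames.items()}
           for f in range(N_FRAMES)]

marginal, independent_joint, empirical_joint = redundancy_summary(records)
print(marginal, independent_joint, empirical_joint)
```

Redundancy still helps in this example, since the joint rate of 0.1 is below each sensor's rate of 0.2, but an argument that simply multiplied the marginal rates would be optimistic. This is one reason the dependence between sensors needs to be accounted for rather than assumed away.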
