Even though most engineering models encountered in practice are not additive, these models are still worth knowing. Additive models have useful statistical properties, and they are a natural choice in machine learning (learning models from data) when the goal is an interpretable model, a property that many other machine learning techniques lack. Linear models are a special case of additive models.

Definition

Let $h:\mathbf{X}\to Y$ be a model that maps the $M$-dimensional vector $\mathbf{X}$ of uncertain, statistically independent input parameters $\{X_1,X_2,\ldots,X_M\}$ to a scalar output $Y$. The model $h(\cdot)$ is additive if it can be written as a sum of univariate functions:

$$

h(\mathbf{X}) = h_1(X_1) + h_2(X_2) + \ldots + h_M(X_M)\;,

$$

where the $h_i(X_i)$ are arbitrary functions that depend on $X_i$ alone.
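The definition can be mirrored directly in code: an additive model is nothing more than a list of univariate functions whose outputs are summed. The sketch below is illustrative only; the helper name `make_additive_model` is hypothetical, and the component functions are taken from the second illustrative example later in this section.

```python
import math
from typing import Callable, Sequence


def make_additive_model(
    components: Sequence[Callable[[float], float]],
) -> Callable[[Sequence[float]], float]:
    """Build h(x) = h_1(x_1) + ... + h_M(x_M) from M univariate functions."""

    def h(x: Sequence[float]) -> float:
        if len(x) != len(components):
            raise ValueError(f"expected {len(components)} inputs, got {len(x)}")
        # Each component h_i sees only its own input x_i.
        return sum(h_i(x_i) for h_i, x_i in zip(components, x))

    return h


# Y = X1^2 + X2^4 + sqrt(X3) + sin(X4)
h = make_additive_model([lambda x: x**2, lambda x: x**4, math.sqrt, math.sin])
print(h([1.0, 1.0, 4.0, 0.0]))  # 1 + 1 + 2 + 0 -> 4.0
```

Because no component receives more than one input, interactions between inputs cannot be expressed in this form by construction.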

Variance decomposition of additive models

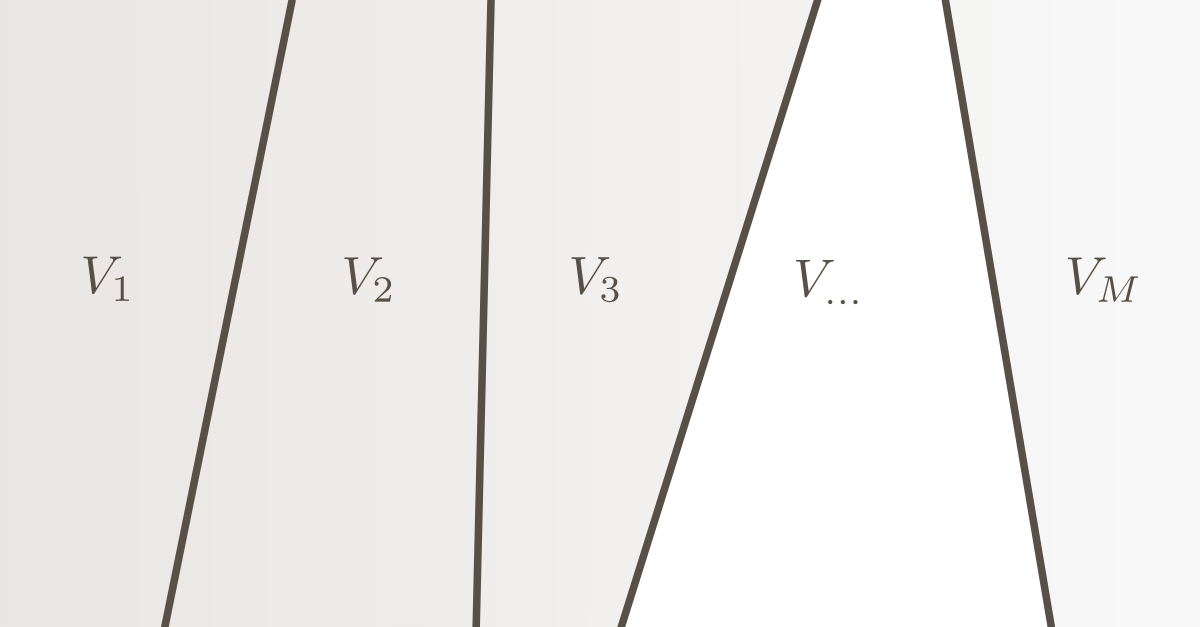

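Because the inputs are statistically independent, the terms $h_i(X_i)$ are mutually uncorrelated, so the variance of their sum equals the sum of their variances. The variance of an additive model therefore decomposes into the sum of the first-order effects $V_i = \operatorname{Var}\left[h_i(X_i)\right]$, which for an additive model coincide with $\operatorname{Var}\left[\operatorname{E}(Y\mid X_i)\right]$: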

$$

\operatorname{Var}\left[Y\right] =V_1 + V_2 + \ldots + V_M\;.

$$
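A quick numerical check of this decomposition is sketched below. It assumes (this is not stated in the text) that all inputs are independent and uniformly distributed on $[0, 1]$, uses the second illustrative example from this section, and estimates $\operatorname{Var}[Y]$ and each $V_i = \operatorname{Var}[h_i(X_i)]$ by plain Monte Carlo with Python's standard library. The function name `monte_carlo_variances` is hypothetical.

```python
import math
import random
import statistics

# Component functions h_i of the example Y = X1^2 + X2^4 + sqrt(X3) + sin(X4).
components = [lambda x: x**2, lambda x: x**4, math.sqrt, math.sin]


def monte_carlo_variances(n: int = 20_000, seed: int = 0):
    """Estimate Var[Y] and V_i = Var[h_i(X_i)] by plain Monte Carlo.

    Assumption (not from the text): X_1, ..., X_4 are i.i.d. Uniform(0, 1).
    """
    rng = random.Random(seed)
    # terms[i][k] = h_i evaluated at the k-th independent draw of X_i.
    terms = [[h_i(rng.random()) for _ in range(n)] for h_i in components]
    # Model output for each sample: sum of the component values in that row.
    y = [sum(row) for row in zip(*terms)]
    var_y = statistics.pvariance(y)
    v = [statistics.pvariance(t) for t in terms]
    return var_y, v


var_y, v = monte_carlo_variances()
print(f"Var[Y]          ~ {var_y:.4f}")
print(f"V_1 + ... + V_4 ~ {sum(v):.4f}")
```

The two printed values should agree up to Monte Carlo noise, because the sample covariances between independently drawn terms are close to zero. Under the uniform assumption the first effect is also known exactly: $V_1 = \operatorname{Var}[X_1^2] = 1/5 - 1/9 = 4/45$.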

Illustrative examples

Examples of additive models are:

$$

Y = X_1 + X_2 + X_3 + X_4\;,

$$

$$

Y = X_1^2 + X_2^4 + \sqrt{X_3} + \sin(X_4)\;.

$$
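As a worked illustration for the first example, assume (an assumption not made in the text) that each $X_i$ is uniformly distributed on $[0,1]$, so that $\operatorname{Var}\left[X_i\right] = 1/12$. With $h_i(X_i) = X_i$, the decomposition gives:

$$

V_i = \operatorname{Var}\left[X_i\right] = \frac{1}{12}\quad(i = 1,\ldots,4)\;,\qquad \operatorname{Var}\left[Y\right] = V_1 + V_2 + V_3 + V_4 = \frac{4}{12} = \frac{1}{3}\;.

$$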

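The following model is NOT an additive model, because each product couples two inputs (an interaction), so it cannot be written as a sum of functions of single inputs: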

$$

Y = X_1\cdot X_2 + X_3\cdot X_4\;.

$$
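One way to see the difference numerically is a mixed second difference: for an additive model, changing $X_i$ and $X_j$ together has exactly the same effect as changing them one at a time, so $f(\mathbf{a}) - f(\mathbf{a}\text{ with }b_i) - f(\mathbf{a}\text{ with }b_j) + f(\mathbf{a}\text{ with }b_i, b_j) = 0$. The sketch below evaluates this at random points in $[0,1)^4$ for the three models above. The function name `max_mixed_difference` and the sampling range are assumptions for illustration; a non-zero value proves the model is not additive, while zeros at finitely many points are evidence rather than proof.

```python
import math
import random
from typing import Callable, Sequence

Model = Callable[[Sequence[float]], float]


def max_mixed_difference(f: Model, m: int, n_points: int = 200, seed: int = 1) -> float:
    """Largest |f(a) - f(a with b_i) - f(a with b_j) + f(a with b_i, b_j)|
    over random point pairs (a, b) in [0, 1)^m and all input pairs i < j.

    For an additive model every such mixed difference is zero up to
    floating-point rounding; a clearly non-zero value exposes an interaction.
    """
    rng = random.Random(seed)
    worst = 0.0
    for _ in range(n_points):
        a = [rng.random() for _ in range(m)]
        b = [rng.random() for _ in range(m)]
        for i in range(m):
            for j in range(i + 1, m):
                a_i = list(a)
                a_i[i] = b[i]  # move only X_i
                a_j = list(a)
                a_j[j] = b[j]  # move only X_j
                a_ij = list(a_i)
                a_ij[j] = b[j]  # move both X_i and X_j
                d = f(a) - f(a_i) - f(a_j) + f(a_ij)
                worst = max(worst, abs(d))
    return worst


models = {
    "X1 + X2 + X3 + X4": lambda x: x[0] + x[1] + x[2] + x[3],
    "X1^2 + X2^4 + sqrt(X3) + sin(X4)": lambda x: (
        x[0] ** 2 + x[1] ** 4 + math.sqrt(x[2]) + math.sin(x[3])
    ),
    "X1*X2 + X3*X4": lambda x: x[0] * x[1] + x[2] * x[3],
}

for name, f in models.items():
    print(f"{name:35s} max mixed difference = {max_mixed_difference(f, 4):.2e}")
```

For the non-additive model the mixed difference for the pair $(X_1, X_2)$ is $(a_1 - b_1)(a_2 - b_2)$, which is generally non-zero. The variance decomposition fails accordingly: if all $X_i$ are uniform on $[0,1]$, each first-order effect is $V_i = 1/48$, so $V_1 + \cdots + V_4 = 1/12$, whereas $\operatorname{Var}[Y] = 7/72$; the remaining $1/72$ is variance caused by the interactions $X_1\cdot X_2$ and $X_3\cdot X_4$.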